Imitation Gaming: Wargaming and Artificial Life

by Roger Mason PhD

Introduction

Alan Turing used chess as a system for experimenting with concepts of artificial life and game-playing machines. In the past 68 years, many intelligent computer games have been developed. Turing could only dream of such easily programmable computers with enormous memory capacity. This article will go back to where Turing started. It will evaluate the possibility of developing artificial life in board wargames.

Definitions

Throughout this paper the following terms will be employed. The terms in this list may be modified from their strict scientific definitions to meet the unique characteristics of wargaming and wargame design.

- Artificial Intelligence

The ability of an artificial entity to perform tasks associated with intelligent beings. (Also referred to as AI)

- Artificial Life

The study of life and lifelike processes and how computers and systems can reproduce them. (Also referred to as ALife)

- Autonomous Agent

An agent (individual or group) that can operate independently within the game.

- Agent Based Modeling

An Agent Based Model (ABM) is a computational model used to simulate the actions and interactions of agents or groups. Simple behavioral rules direct the actions of these agents or groups. As the entities interact, complex behavior may emerge.

- Preferences

A preference is a pre-determined action that an autonomous agent will take when certain environmental conditions occur.

- Preference Layers

The combination of autonomous agent preferences that overlap to produce emergent behavior in a wargame.

Artificial Intelligence versus Artificial Life

Artificial intelligence and artificial life share a root connection. Both are artificial, yet the two are quite different. Artificial Intelligence (AI) seeks to imitate processes such as decision making, voice perception, language translation, and learning. Dartmouth professor John McCarthy first coined the term artificial intelligence in 1956. The goal of AI is to build machines capable of human reasoning.

Artificial Life (ALife) is the study of life and lifelike processes. This is accomplished through synthesis and simulation. Christopher Langton is credited with inventing the term Artificial Life. The goal of ALife is to develop simulations and systems capable of imitating intelligent behavior.

Artificial Life in Games

Turing's questions focused on the possibility of a machine playing chess.

- Can a machine be programmed to follow the rules of chess?

- Can a machine solve chess problems given the position of the pieces?

- Can a machine play a reasonable game of chess?

- Can a machine learn from playing chess?

Turing believed this was possible, and the current state of Artificial Intelligence development confirms it is true. Is this also true for games with ALife?

Games with ALife can be programmed. Programming provides rule sets for the autonomous agent to follow. The rule sets must be simple and limited. One useful technique is to assume the agent will follow the rules of the games as it moves and interacts.

Can an autonomous agent in an ALife game solve problems given the positions of the pieces? This question is more difficult because the response of the agent depends on its interaction or contact with other agents or opponents. In an AI based game, a bishop can observe an open rook in the opposite corner of the board and capture it. This is less likely in ALife games where the interaction involves proximity.

Can an ALife agent play a reasonable wargame? I think the answer is yes. Wargames are dissimilar to chess. Wargames do not have a ranking system for gameplay or player abilities. My first ALife based game involved managing riots. The game was intended to train law enforcement mobile field force commanders on crowd control techniques. The ALife agents were in two groups: demonstrators and anarchists. The terrain was a map of a town which included commercial areas, open spaces, and residential areas.

The game involved a large crowd of demonstrators with a smaller mix of anarchists. The ALife agents were programmed to move to open spaces. If the demonstrators came in contact with the anarchists, they became more active. The anarchists became more violent if they came in contact with the police. The crowd represented various groups of autonomous agents.
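The contact rules described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the rule set used in the original game: the `CrowdAgent` class, the `activity` counter, and the definition of contact as "same or neighboring map cell" are all hypothetical, and the movement toward open spaces is left out.

```python
from dataclasses import dataclass

@dataclass
class CrowdAgent:
    kind: str            # "demonstrator" or "anarchist"
    position: tuple      # (x, y) map cell
    activity: int = 0    # hypothetical escalation level, not from the game

def in_contact(a, b):
    """Contact is assumed to mean the same cell or a neighboring cell."""
    return max(abs(a[0] - b[0]), abs(a[1] - b[1])) <= 1

def apply_contact_rules(agents, police_positions):
    """Escalate demonstrators near anarchists and anarchists near police."""
    anarchist_cells = [a.position for a in agents if a.kind == "anarchist"]
    for agent in agents:
        if agent.kind == "demonstrator":
            if any(in_contact(agent.position, c) for c in anarchist_cells):
                agent.activity += 1
        elif agent.kind == "anarchist":
            if any(in_contact(agent.position, p) for p in police_positions):
                agent.activity += 1
    return agents
```

Each rule looks only at an agent's immediate neighbors, so any crowd-level pattern comes from many local contacts rather than from a central plan.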

The live players were not aware they were playing a game system. After it was over, I asked the participants for their comments. They remarked about the subtle strategies employed by the demonstrators and the difficulty in countering their various moves. They were completely convinced a human player was making the demonstrators’ moves.

Can an ALife agent learn from its game experience? The answer is no. AI involves artificial intelligence “agents” that perceive their environment and take actions to achieve their goals. AI agents are multi-dimensional. They can store knowledge and learn from past experiences. ALife involves agents that appear to be alive through pre-programmed actions that mimic life. They are one-dimensional. When the game is over the experiences are lost and the capabilities of the agent default to their original setting.

Paper Machine Chess

The first game involving simple AI and ALife was Alan Turing’s Paper Machine Chess. Turing was working in the University of Manchester’s computer department. He wanted to design a programmable computer that could play chess. Turing felt that chess was a simple system that was recognizable by nearly everyone. The biggest obstacle was a lack of a machine capable of playing programmed chess.

Turing developed a series of algorithms based on the rules of chess. Besides the rules, the algorithms included preferences such as defending the king. The paper machine involved the algorithms and written out moves that could be applied based on the situation. Turing’s paper machine could react to given situations. The game combined simple AI and ALife techniques. The paper machine could not think or learn but it could play chess. These are the same concepts that make artificial life wargames possible.

Employing ALife in Games

Solitaire Play

The most obvious use of ALife is in solitaire games. An ALife opponent is entirely neutral. By neutral I mean the ALife agent is strictly bound by the pre-programmed actions or preferences. All an ALife agent knows is the rule set governing its actions and the boundaries of its operations. These parameters can be used to design a game opponent that focuses on a particular action or type of operation.

Multi-Dimensional Play

Traditional wargames often include only two opposing forces with a player commanding each of the armies. Armed conflict often involves a complicated mixture of forces whose loyalties can vary from self-serving to adaptable. This type of situation is often simulated by rules that allow players the opportunity to capture the loyalty of a neutral force.

ALife offers designers the option to include these forces as autonomous entities operating on multiple dimensions. The rule set can be programmed to direct the autonomous forces to move to one or more objectives. The autonomous force can be directed to act on any nearby forces or occupy any location in the game. The ALife force can become an ally or an adversary.

Joseph Miranda and I recently included ALife in a new game called Cyber Crisis. This is a cyberwar simulation played in the open and dark webs. In the open web is a neutral autonomous network. In the dark web is an autonomous hacktivist organization. The two human players can attempt to ally themselves with, co-opt, or destroy these autonomous networks. The two players now must deal with a human opponent and two autonomous networks with individual preferences and actions.

Characteristics of Artificial Life in Games

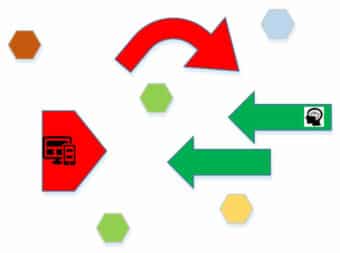

There are four characteristics common to ALife in wargames: opponent recognition, operational limitations, environmental sensitivity, and emergent behavior. The purpose of a wargame is to simulate combat operations. To do that the autonomous agents must be able to recognize and react to an opponent. The ALife agent’s preference layer can be affected by programming actions which cause them to react to circumstances. An example is a preference that the ALife agent move adjacent to and attack any force that advances within a given distance.

One of the issues of ALife is operational limitations. If the game is long enough a human player may detect patterns which reveal the ALife agent’s preferences. ALife can be programmed to detect changes in the game environment. These can be programmed as secondary preferences.
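The example preference in the paragraph above, move adjacent to and attack any force that advances within a given distance, might be sketched as follows. The square grid, the distance measure, the function names, and the choice to ignore movement allowances are assumptions made for illustration only.

```python
def sign(n):
    """Return -1, 0, or 1 according to the sign of n."""
    return (n > 0) - (n < 0)

def grid_distance(a, b):
    """Distance in cells on a square grid where diagonal steps count as one."""
    return max(abs(a[0] - b[0]), abs(a[1] - b[1]))

def react_to_opponents(agent_pos, opponent_positions, trigger_range):
    """Pick the nearest opponent inside trigger_range, or return None."""
    in_range = [p for p in opponent_positions
                if grid_distance(agent_pos, p) <= trigger_range]
    if not in_range:
        return None  # no preference triggered this turn
    target = min(in_range, key=lambda p: grid_distance(agent_pos, p))
    # The cell next to the target on the side facing the agent.
    move_to = (target[0] + sign(agent_pos[0] - target[0]),
               target[1] + sign(agent_pos[1] - target[1]))
    return {"move_to": move_to, "attack": target}
```

An agent at `(0, 0)` with a trigger range of 3 would respond to an opponent at `(3, 0)` by moving to `(2, 0)` and attacking, and would ignore one at `(6, 6)`.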

ALife games include emergent behavior. Emergent behavior is the behavior of a system that is not dependent on the actions of the individual parts. In emergent behavior, the relationship of the parts to one another is what impacts the overall system. The behavior comes about through potential interactions which influence the behavior of the system.

The emergent behavior appears as the various parts of the game come into contact. The behavior represents an evolution as the artificial life agents, environmental factors, and opponent moves interact. An example of emergent behavior could be several unique, autonomous agents moving independently toward an objective.

Examples of behaviors might include connectivity and self-organization. A game example is two independent ALife forces moving toward the same target. If their movement programming results in the forces coming within a certain proximity they will combine and reorganize as one force. Another example is combat where possible preferences and programmed actions interact with various opponents.
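The self-organization example above can be expressed as a small merge rule. This is a sketch under assumptions: forces are plain dictionaries, `merge_range` is a hypothetical design parameter, and the combined force is placed at the first force's position.

```python
def merge_if_close(force_a, force_b, merge_range):
    """Combine two forces into one when they come within merge_range cells."""
    ax, ay = force_a["position"]
    bx, by = force_b["position"]
    if max(abs(ax - bx), abs(ay - by)) <= merge_range:
        combined = {
            "position": force_a["position"],
            "units": force_a["units"] + force_b["units"],
        }
        return [combined]
    return [force_a, force_b]  # too far apart, both continue independently
```

Neither force is told to seek out the other. The merge happens only because their separate movement rules happen to bring them close together.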

Foundations of Complexity

Kinetic and non-kinetic complexity can include when, where, how, or if the ALife agent will engage or avoid an opponent. Real world conflict often involves situations where players can change their loyalties. Artificial Life provides a way to model this behavior. For example, ALife agents can be moved into terrain impacting their preferences. ALife forces can be mobile or fixed.

A typical characteristic of ALife agents is their sensitivity to environmental changes. By introducing random events the environment may be affected on multiple planes. (Ex: weather slows movement, enemy reinforcements are introduced, or combat effects are modified for one turn.) The greater the instability of the environment the greater the possibility of interaction with the ALife preference layers. Anything that optimizes possible game interactions will result in increased complexity.

There are possible levels of secondary complexity. It is possible the autonomous agent may take actions a human player might not select. For example, in a tactical situation the human player suddenly comes under attack from a smaller and very aggressive ALife force. If the roles were reversed, the human player might not attack given the low probability of success. The ALife agent cannot optimize its actions, so it will act no matter what the probability of success is.
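A random event draw of this kind could be sketched as a simple table lookup. The event names, the modifier values, and the 50 percent chance per turn are all hypothetical placeholders, not values taken from any published game.

```python
import random

# Hypothetical one-turn effects; the numbers are placeholders.
EVENTS = {
    "weather": {"movement_modifier": -1},
    "reinforcements": {"new_enemy_units": 1},
    "combat_shift": {"combat_modifier": 1},
}

def draw_event(rng, chance=0.5):
    """Return (name, effect) for this turn, or None when no event occurs."""
    if rng.random() >= chance:
        return None
    name = rng.choice(sorted(EVENTS))
    return name, EVENTS[name]

rng = random.Random()  # pass a seeded Random to replay a game
event = draw_event(rng)
```

Raising `chance` makes the environment less stable, which gives the preference layers more opportunities to be triggered.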

Secondary complexity can also occur when interactions temporarily increase due to random events. The random events may impact actions by the human player or modify preferences by the autonomous agent. When these possibilities are combined, great complexity in ALife game play is possible.

Designing a Wargame with Artificial Life

What Type of Scenario Topics Lend Themselves to ALife?

Do all scenario topics lend themselves to the use of ALife? ALife can be applied in any game scenario but some offer more opportunities for the ALife to function autonomously. Artificial life designs can work well with highly complex systems where the game scenario is focused on a narrow band of action. The technique also complements games with independent forces seeking the same objective. It can also be employed with games involving an entity whose growth is based on environmental changes.

Evaluating the System

Once a scenario has been selected the systems involved must be evaluated. The purpose of the evaluation is to identify the key factors that influence the behavior of the system. Here are several examples.

You have decided to design a game about communicable disease. In your game you are considering modeling the illness as an ALife agent. The key behavioral factors could be water supply, hygiene, food, and climate. To ensure fidelity these factors should be included in the game design.

Perhaps you are designing a wildfire operations game modeling the fire as an ALife agent. The key factors would be weather, terrain, and fuel. These systems factors should be included in your design. By including these factors in the design of your autonomous agents it is possible to develop preference layers that accurately represent the system.

Preference development

A fundamental goal of any wargame is to frame a set of conditions that cause opponents to interact, offering opportunities for decision making. In a traditional historic scenario wargame this can be simple. (Ex: Texans defend the Alamo and the Mexican Army attacks.) To make the game function, preferences for the autonomous agents must be developed. The preferences define what the agents will do.

The goal of the preferences is to encourage interaction. What maneuver or objective preferences will cause the opponents to come in contact? How will a terrain preference influence maneuver or the outcome of combat? One technique for developing preferences is agent based modeling.

Agent based modeling

Agent based modeling was invented to explain the collective behavior of autonomous agents. The agents are provided with simple behavioral rules that provide boundaries and direction for their actions. The behavioral rules serve as the decision making methodology for the agent. These rules are sometimes referred to as preferences.
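One way to picture behavioral rules acting as a decision making methodology is an ordered list of condition and action pairs, where the first rule whose condition holds decides what the agent does. The `Preference` type, the state keys, and the action names below are hypothetical and serve only as a sketch.

```python
from typing import Callable, NamedTuple, Optional

class Preference(NamedTuple):
    name: str
    condition: Callable[[dict], bool]  # tests the agent's view of the game
    action: str                        # what the agent does if it applies

def choose_action(preferences, state) -> Optional[str]:
    """Return the action of the first preference whose condition is met."""
    for pref in preferences:
        if pref.condition(state):
            return pref.action
    return None

# Hypothetical preference layer: fight if an enemy is adjacent, else advance.
layer = [
    Preference("engage", lambda s: s["enemy_adjacent"], "attack"),
    Preference("advance", lambda s: True, "move_to_objective"),
]
```

Calling `choose_action(layer, {"enemy_adjacent": True})` yields `"attack"`, while the same call with `False` falls through to `"move_to_objective"`. Reordering the list changes the agent's priorities without changing any individual rule.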

Introducing an Attractor

In ALife games it is important to include an attractor. The attractor should be connected to the autonomous agents’ preferences. It should stimulate the greatest opportunities for interactions between the autonomous agents and the human player. In wargames a natural attractor is combat.

Night Fight: Artificial Life on the Eastern Front

Note: This section describes the design of an artificial life game published by Decision Games. I want to thank Doc Cummins and the Decision Games team for their hard work on this game. I especially want to commend Eric Harvey for the game development and graphics, Joseph Miranda for pulling the rule set together, and Ty Bomba for lending me his copy of the US Army study on German night fighting in World War Two.

Question

This project began with a question. Is it possible to develop a tactical wargame employing ALife? I began searching my military history bookshelves looking for a suitable battle.

Scenario

Paul Carell’s book Scorched Earth is a study of the war on the Eastern Front of World War Two from 1943 to 1945. The book provides details about the Battle of Kursk. One story that stood out to me was the night attack on the fortified town of Rzhavets. The Germans used the night time confusion to infiltrate a column of tanks six miles into the Russian lines.

I did some further investigation about the battle and read the US Army study on night combat during World War II. I reached the following conclusions:

The Russian forces had limited command and control. At night, units were often mixed together with very little organizational cohesion. Russian units were routinely ordered to stand in place wherever they were located when night fell. Units attempting to move up were ordered to stay on the roads. If fighting broke out the mobile units were expected to move to the area and engage the enemy. The Russians were unable to maneuver effectively at night so they tended to attack head-on when enemy contact occurred.

The Germans had much better command and control. Most of their armored vehicles had radios which facilitated their ability to maneuver. German units could move cross country and were flexible in where and how they engaged the enemy.

System

The next step in designing the game was to develop an overview of each system. The Russian tactical system was rigid while the Germans were much more flexible. The Russian force would be represented by ALife.

Russian Forces

Divided between stationary and mobile.

The mobile forces were road bound.

Any Russian unit contacting a German unit would attack immediately.

Russian mobile units would move to the nearest combat and engage.

German Forces

German units were mobile.

The Germans had freedom of maneuver including cross-country travel.

German forces could attack or retreat at their discretion.

Model

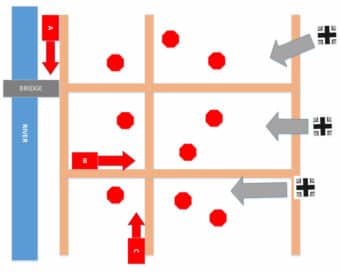

This is a tactical level game (Ex: one tank playing piece represents one armored vehicle.) The Russian force is a mixture of armored vehicles, artillery, machine guns and infantry. This was designed to simulate the confusion of Russian units during the night. The German player is given the types of units engaged in the historic battle. The German player may select their mixture of units based on a limited number of resource points.

Game Mechanics

The Russian units were placed face down. Units were randomly selected and placed on each of the Russian stationary unit locations on the map. Russian units were drawn for three autonomous columns. These were placed on one of three predesignated column starting points. The Russian columns move following their preferences.
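The random setup described above might look like the following sketch. The unit names, site labels, and column size are hypothetical, since the actual counter mix is not given here, and the pool is assumed to hold enough units for every site and column.

```python
import random

def set_up_russians(unit_pool, stationary_sites, column_starts, column_size, rng):
    """Shuffle the face-down units, then deal them to sites and columns."""
    pool = list(unit_pool)
    rng.shuffle(pool)
    # One hidden unit per stationary location.
    stationary = {site: pool.pop() for site in stationary_sites}
    # Each column is an ordered list; index 0 is the lead unit.
    columns = {start: [pool.pop() for _ in range(column_size)]
               for start in column_starts}
    return stationary, columns

# Hypothetical counter mix and map labels.
pool = ["tank"] * 4 + ["AT gun"] * 2 + ["infantry"] * 3 + ["truck"]
stationary, columns = set_up_russians(
    pool, ["S1", "S2", "S3", "S4"], ["C1", "C2", "C3"], 2, random.Random()
)
```

Because the draw is random, neither the strength of a given position nor the order of units within a column is known to anyone until the pieces are revealed.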

The Germans can organize their forces as they wish. They may maneuver and attack as they desire. This includes the ability to retreat. The Germans are moving under cover of darkness. They must conduct a die roll to see if they are spotted by the Russian units.

Preferences

The Russian preferences are:

- All stationary units are fixed and cannot move. They engage any German unit they can spot.

- Mobile columns move at a fixed rate. When they reach a crossroads they must conduct a die roll to determine what direction they should go. If they reach the edge of the map they must reverse direction.

- If combat occurs the Russian mobile columns immediately take the shortest road route to the fighting.

- Any mobile column spotting a German unit must immediately engage it.
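The mobile column preferences listed above can be sketched as one decision function. This is an illustration under assumptions rather than the published rules: the road network is a hypothetical adjacency dictionary of junctions, a column moves one junction per call, the priority order (engage, then converge, then patrol) is assumed, and a random choice stands in for the crossroads die roll.

```python
import random
from collections import deque

def shortest_road_route(roads, start, goal):
    """Breadth-first search over road junctions; returns a list of nodes."""
    queue, seen = deque([[start]]), {start}
    while queue:
        path = queue.popleft()
        if path[-1] == goal:
            return path
        for nxt in roads[path[-1]]:
            if nxt not in seen:
                seen.add(nxt)
                queue.append(path + [nxt])
    return None  # no road connection

def column_order(column, roads, spotted_german, combat_location, rng):
    """Decide one mobile column's action for this turn."""
    here, came_from = column["node"], column["came_from"]
    if spotted_german is not None:            # engage anything it spots
        return ("engage", spotted_german)
    if combat_location is not None:           # converge on the fighting
        route = shortest_road_route(roads, here, combat_location)
        if route is not None:
            return ("move", route[1]) if len(route) > 1 else ("hold", here)
    exits = [n for n in roads[here] if n != came_from]
    if not exits:                             # map edge: reverse direction
        return ("move", came_from) if came_from is not None else ("hold", here)
    return ("move", rng.choice(exits))        # crossroads die roll

# Hypothetical road net: A-B, B-C, B-D, D-E.
roads = {"A": ["B"], "B": ["A", "C", "D"], "C": ["B"], "D": ["B", "E"], "E": ["D"]}
```

With this road net, a column at `A` that learns of combat at `E` is ordered to `B`, the first step of the shortest road route.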

Attractor

The attractor was combat. The German objective is to cross the map as quietly as possible avoiding Russian units. If they are spotted and combat occurs the Russian mobile columns immediately begin to converge on that location.

Complexity

There are several layers of complexity in the game.

Fog of War

The German player can see the flipped over, stationary Russian pieces. Since the pieces were randomly placed, they might represent a truck or a lethal anti-tank gun. There is no way to assess the strength of the Russian defenses until they are revealed during combat. The same is true for the Russian mobile columns. The composition of the force and the position of each unit in the column is random and remains hidden until combat occurs. The Germans cannot determine the threat level of the column until the composition is revealed during combat.

Concealed Movement

The Germans have the ability to conduct limited, concealed movement. One of the random effects in the game is the spotting rule where the German player must determine if they have been seen.
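A spotting check of this kind can be sketched with a single die roll. The six-sided die and the threshold of 2 are hypothetical values chosen for illustration; the actual spotting numbers are not stated here.

```python
import random

def is_spotted(rng, spot_on_or_below=2, sides=6):
    """Roll one die; the unit is spotted on a roll at or below the threshold."""
    return rng.randint(1, sides) <= spot_on_or_below

def chance_spotted_within(checks, spot_on_or_below=2, sides=6):
    """Probability of being spotted at least once in a number of checks."""
    p = spot_on_or_below / sides
    return 1 - (1 - p) ** checks
```

Under these assumed numbers a single check spots the unit one time in three, while three checks raise the cumulative chance to about 70 percent. This shows why concealment tends to be limited when a force must pass several positions.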

Ability to Self-Organize

The German player can begin the game with their forces in any formation they desire. These formations can be reorganized as the tactical situation develops. The Russian autonomous columns can join one another if they come in contact during their random road movement. This permits two columns to reorganize into one. If the lead units of a mobile column are destroyed the next units can move forward to attack. This behavior allows stronger units to move into combat.

Outcome

In repeated playtests similar outcomes were achieved. The German player was able to penetrate into the stationary Russian positions. Eventually, the German units are spotted and fighting occurs. As soon as combat occurs the Russian columns begin to converge from their random locations on the board. German attempts to maneuver result in more Russian stationary units becoming engaged.

The Russians suffer from the problems of road bound columns. The composition of the column is random so the first mobile unit to engage the Germans may be the weakest. This results in high Russian losses. The converging mobile columns mean the Russians are getting stronger as the Germans attempt to maneuver.

The game results are very similar to the historic incident. In the 1943 battle the Germans were able to penetrate deep into the Russian lines. They were eventually spotted and fought increasingly stronger Russian mobile and stationary units. The Russians were able to destroy the bridge before the Germans could capture it.

The game exhibited characteristics of artificial life. The mobile columns are sensitive to changes in the environment by their maneuver reaction to combat. They automatically coordinate combat action with stationary units whose preference is to remain in a fixed location. This results in emergent behavior where the individual autonomous Russian forces come together to block any attempt by the German player to penetrate their defenses.

Summary: Fooled by the Interlocutor

In Alan Turing’s Imitation Game an interrogator evaluates written statements by a computer and a human, the interlocutors. The purpose of the game is to see if the computer can intelligently converse, fooling the interrogator. The more convincing the answers the easier it is to attribute intelligence to the machine.

One of the values of wargames is accurately simulating armed conflict. ALife provides a fresh design tool that increases the realism of the simulation. Like any other tool, it has limitations. When combined with other design techniques it can provide a new opportunity to model and simulate warfare.

Alan Turing concluded his paper “Computing Machinery and Intelligence” with the following quote. He wrote, “We can only see a short distance ahead, but we can see plenty there that needs to be done.” I believe the potential of board wargames has yet to be fully explored. Artificial life is just one avenue of exploration. Turing was right. Regarding board wargaming, plenty needs to be done.